The Two Regimes of Computation

Classical computer science grew up in what Dr. White terms a High Validation Environment. A program ran on a single CPU, with a deterministic operating system, in a controlled laboratory. Inputs were bounded, outputs were reproducible, and correctness was verifiable by direct re-execution. Mathematics thrived in this environment because the environment was, itself, mathematical: every relevant variable could be named, and every relevant transformation could be logged.

Modern infrastructure inverts every one of these assumptions. A typical production deployment in 2026 spans multiple hyperscaler regions, runs across virtualized hardware with shifting physical topology, includes autonomous agents making decisions without human oversight, and accepts inputs from untrusted peers over high-latency links. The variables are unbounded. The outputs are not reproducible. Verification by re-execution is impossible because the environment that produced the original output no longer exists.

The Loss of Reproducible Proof

In Low Validation Environments, a mathematical proof cannot count on its surroundings to supply reliable context: there are too many uncontrolled variables for the proof's assumptions to hold. Consider a zero-knowledge proof (ZKP) generated on a Kubernetes pod and verified on a mobile device: the prover's hardware, the verifier's runtime, the network path, and the timing of every intermediate hop all influence whether the proof reaches its destination in a verifiable state. Classical ZKP assumes a static verifier with static computational resources. Low Validation breaks that assumption.
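For readers who want a concrete picture of the classical construction being contrasted here, the following is a minimal sketch of a Schnorr-style proof of knowledge made non-interactive with a Fiat-Shamir hash. The group parameters `P`, `Q`, and `G` are toy values chosen for readability, and the function names are illustrative assumptions rather than anything the article specifies. The sketch shows only the mathematical check. It does not model the hardware, runtime, or network effects described above, apart from one altered value standing in for a proof damaged in transit.

```python
import hashlib
import secrets

# Toy group parameters (assumption, far too small for real use):
# G has prime order Q modulo P.
P, Q, G = 23, 11, 2


def challenge(public_key: int, commitment: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript into the range [0, Q)."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int) -> tuple[int, int]:
    """Produce (commitment, response) showing knowledge of `secret`."""
    nonce = secrets.randbelow(Q)
    commitment = pow(G, nonce, P)
    c = challenge(pow(G, secret, P), commitment)
    response = (nonce + c * secret) % Q
    return commitment, response


def verify(public_key: int, proof: tuple[int, int]) -> bool:
    """Check G^response == commitment * public_key^c (mod P) from public values only."""
    commitment, response = proof
    c = challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


secret = 7
public_key = pow(G, secret, P)
proof = prove(secret)
ok = verify(public_key, proof)

# A response altered on the way to the verifier no longer satisfies the check.
tampered = (proof[0], (proof[1] + 1) % Q)
tampered_ok = verify(public_key, tampered)
```

In this sketch the verifier needs only the public key and the proof bytes, so anything that changes those bytes between prover and verifier turns a valid proof into an invalid one.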

What Low Validation Looks Like in Practice

Low Validation is not a single failure mode; it is a family of related breakdowns. Operators encountering the following symptoms are typically operating in low-validation regimes whether they realize it or not:

- Non-reproducible security incidents. A vulnerability is observed once, investigated thoroughly, and cannot be reproduced — because the environment that produced it has rotated out.

- Oracle disagreement. Two independent oracles, fed identical inputs, produce divergent attestations because their underlying entropy sources have drifted.

- Intermittent key-derivation failures. The entropy sources that seed key derivation report estimates that fluctuate widely depending on workload placement.

- Cross-region state divergence. Replicas in different regions produce cryptographic outputs that should be identical but are not, and no audit log can conclusively explain the divergence.

Each of these is a symptom of the same underlying condition: the cryptographic primitives are depending on validation guarantees the environment cannot provide.
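Symptoms such as oracle disagreement and cross-region state divergence can at least be detected mechanically, even when they cannot be explained. The following is a minimal sketch, assuming each replica's output for the same logical operation is available as bytes. The region names and sample values are hypothetical, and `find_divergence` is an illustrative helper, not part of any product described here.

```python
import hashlib
from collections import defaultdict


def digest(output: bytes) -> str:
    """Return a comparable fingerprint of a replica's output."""
    return hashlib.sha256(output).hexdigest()


def find_divergence(outputs_by_region: dict[str, bytes]) -> dict[str, list[str]]:
    """Group regions by output digest.

    One group means every replica agrees; more than one means divergence.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for region, output in sorted(outputs_by_region.items()):
        groups[digest(output)].append(region)
    return dict(groups)


# Hypothetical replica outputs for the same logical operation.
observed = {
    "us-east": b"derived-key-material-A",
    "eu-west": b"derived-key-material-A",
    "ap-south": b"derived-key-material-B",
}

groups = find_divergence(observed)
diverged = len(groups) > 1
for fingerprint, regions in groups.items():
    print(fingerprint[:12], regions)
```

A check like this reports which replicas disagree, but not why. That matches the symptom described above, where the audit log cannot conclusively explain the divergence.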

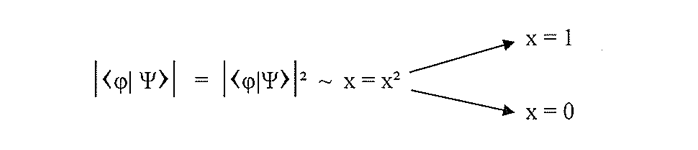

Non-Mathematical ZKP and the Trusted Anchor

When ZKP operates in a Low Validation Environment, it must become non-mathematical. Rather than rigorous equation sequences, it must rely on absolute physical constants: a Trusted Anchor. The Trusted Anchor is not a bigger proof; it is a reference frame. It exists outside the formal system of the validator and the prover, and each can reference it without requiring validation from the other.

ATOFIA uses the chaotic behavior of thermodynamic systems to operate safely outside the boundaries of mathematical reproducibility. By relying on uncompensated thermodynamic thresholds, networks stay secure regardless of the validation density of their environment. The same mixing protocol running in a laboratory and on a far-edge satellite produces outputs drawn from the same physical substrate, not because the protocol is deterministic, but because physics is universal.

"A Trusted Anchor must be a reference frame that exists outside the formal systems of every participant. Mathematics cannot provide this, because mathematics is itself a formal system. Only physics can." — Dr. Thurman Richard White, ATOFIA

Implications for Zero Trust Architectures

Every Zero Trust architecture is, by definition, operating in a Low Validation Environment. The whole point of Zero Trust is that no participant is trusted by default; every transaction must be independently verifiable. But when the validators are themselves running in low-validation conditions, "independent verification" becomes a polite fiction. The validators cannot reliably agree on what they are seeing.

A physical Trusted Anchor resolves this by providing a reference every validator can read from but none can author. If the anchor says "the entropy stream at timestamp T was this microstate," no validator needs to trust any other validator to agree. They all read the same physical substrate.
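The "read the same reference, reach the same conclusion" property can be sketched in a few lines. This is an illustration under stated assumptions. `AnchorReading` is a hypothetical record type, the microstate bytes are placeholders standing in for a physical measurement, and the article does not specify how a reading is published or delivered to validators. The sketch uses a standard HMAC-SHA256 to bind a transaction to a reading.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AnchorReading:
    """Hypothetical record of what the anchor published at one timestamp."""
    timestamp: int
    microstate: bytes


def derive_tag(reading: AnchorReading, transaction: bytes) -> str:
    """Bind a transaction to an anchor reading with HMAC-SHA256."""
    message = reading.timestamp.to_bytes(8, "big") + transaction
    return hmac.new(reading.microstate, message, hashlib.sha256).hexdigest()


# Placeholder bytes standing in for a physical measurement (assumption).
reading = AnchorReading(timestamp=1_700_000_000, microstate=bytes(range(32)))
transaction = b"transfer:42"

# Three validators compute independently, exchanging no messages.
tags = {name: derive_tag(reading, transaction) for name in ("v1", "v2", "v3")}
agree = len(set(tags.values())) == 1

# A validator holding a different reading produces a different tag.
stale = AnchorReading(timestamp=1_700_000_000, microstate=bytes(32))
stale_tag = derive_tag(stale, transaction)
```

Agreement here follows from every validator holding the same reading. The sketch deliberately leaves open how that reading is obtained, which is the role the text assigns to the physical anchor.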

Comparison with FIPS 140-3 Validation Thresholds

Standards such as FIPS 140-3 implicitly assume High Validation conditions when they prescribe self-test behavior, entropy source characterization, and cryptographic boundary definitions. A module operating across multiple cloud regions on rotating hardware cannot always satisfy those assumptions. The thermodynamic anchor provides a validation-independent reference that satisfies the spirit of the standard (reproducible, testable entropy) without requiring the infrastructure assumptions the letter of the standard was written for.
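To make "testable entropy" concrete, the following is a minimal sketch of one continuous health test of the kind used for entropy sources: the Repetition Count Test described in NIST SP 800-90B, which flags a source that emits the same value too many times in a row. The cutoff follows the formula given there, C = 1 + ceil(-log2(alpha) / H), where H is the assessed min-entropy per sample and alpha is the acceptable false-positive probability. The sample data and the choice of H = 4.0 are illustrative assumptions, not measurements from any real source.

```python
import math
from typing import Iterable


def repetition_cutoff(min_entropy_per_sample: float, alpha_exp: int = 20) -> int:
    """Cutoff C = 1 + ceil(-log2(alpha) / H), with alpha = 2**-alpha_exp."""
    return 1 + math.ceil(alpha_exp / min_entropy_per_sample)


def repetition_count_test(samples: Iterable[int], cutoff: int) -> bool:
    """Return True if no value repeats `cutoff` or more times in a row."""
    last = None
    run = 0
    for sample in samples:
        if sample == last:
            run += 1
            if run >= cutoff:
                return False  # source looks stuck
        else:
            last, run = sample, 1
    return True


# Assumed assessment: 4 bits of min-entropy per sample, alpha = 2**-20.
cutoff = repetition_cutoff(4.0)

healthy_ok = repetition_count_test([1, 2, 3, 3, 3, 4], cutoff)
stuck_ok = repetition_count_test([7] * cutoff, cutoff)
```

The test is cheap enough to run on every sample, which is why it suits rotating hardware: each new placement is checked against the same cutoff rather than trusted on the basis of an earlier characterization.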

The Validation Spectrum

It is useful to think of validation as a continuous spectrum rather than a binary condition. At the extreme high end is a research laboratory with a single well-characterized instrument producing identical results on every run. At the extreme low end is a swarm of autonomous agents operating independently across adversarial networks with no central coordinator. Most production infrastructure sits somewhere between these extremes, and its position on the spectrum changes hour by hour as traffic, workload, and failure conditions evolve.

Classical cryptographic design assumes a single, fixed position on this spectrum — usually near the high end, where formal proofs apply. Real infrastructure requires a design that holds up across the entire spectrum, including during incidents when validation temporarily drops to near-zero. A thermodynamic anchor is spectrum-invariant: it does not care what the validation density of the surrounding environment is, because it is not participating in any validation exchange. It is simply a physical reference that everyone can read from.

Implications for Decentralized and Autonomous Systems

Decentralized systems — blockchain oracles, federated-learning coordinators, autonomous agent meshes — are the paradigm case of Low Validation Environments. Every participant must treat every other participant as potentially adversarial or buggy. In such systems, the question "what do we all agree is true?" is fundamentally unanswerable through consensus alone, because consensus algorithms themselves depend on the validation assumptions that are failing. A shared physical reference changes the problem: participants do not need to agree on truth through negotiation; they can all read the same physical substrate and derive identical conclusions from identical observations. This is the non-mathematical ZKP architecture Dr. White anticipates as the next stage of decentralized security.