Algorithmic Predictability Vectors

Modern training pipelines consume randomness for many distinct purposes: weight initialization, dropout, data augmentation, masking, curriculum mixing, temperature-scaled sampling, beam-search tie-breaking, and Monte Carlo rollouts in RLHF. All of these consumers typically share a single algorithmic substrate: a deterministic pseudo-random number generator (PRNG), often split into per-consumer streams from one seed. That substrate has a finite period, observable algebraic structure (lattice structure, in the case of linear generators), and characteristic correlations across split streams.
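The shared substrate is easy to make concrete. The sketch below uses NumPy's `SeedSequence.spawn` to derive one generator per consumer from a single root seed. The consumer names are illustrative only, and other frameworks expose their own, analogous splitting mechanisms. The point it shows is narrow: every draw in every consumer is a pure function of that one root seed.

```python
import numpy as np

# Illustrative consumer names; a real pipeline has its own list.
CONSUMERS = ["init", "dropout", "augment", "masking", "sampling"]


def make_consumers(root_seed: int) -> dict[str, np.random.Generator]:
    """Derive one generator per consumer from a single root seed."""
    children = np.random.SeedSequence(root_seed).spawn(len(CONSUMERS))
    return {name: np.random.default_rng(child)
            for name, child in zip(CONSUMERS, children)}


run_a = make_consumers(1234)
run_b = make_consumers(1234)

# Every draw, in every consumer, is fully determined by the root seed.
for name in CONSUMERS:
    assert np.array_equal(run_a[name].random(5), run_b[name].random(5))
```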

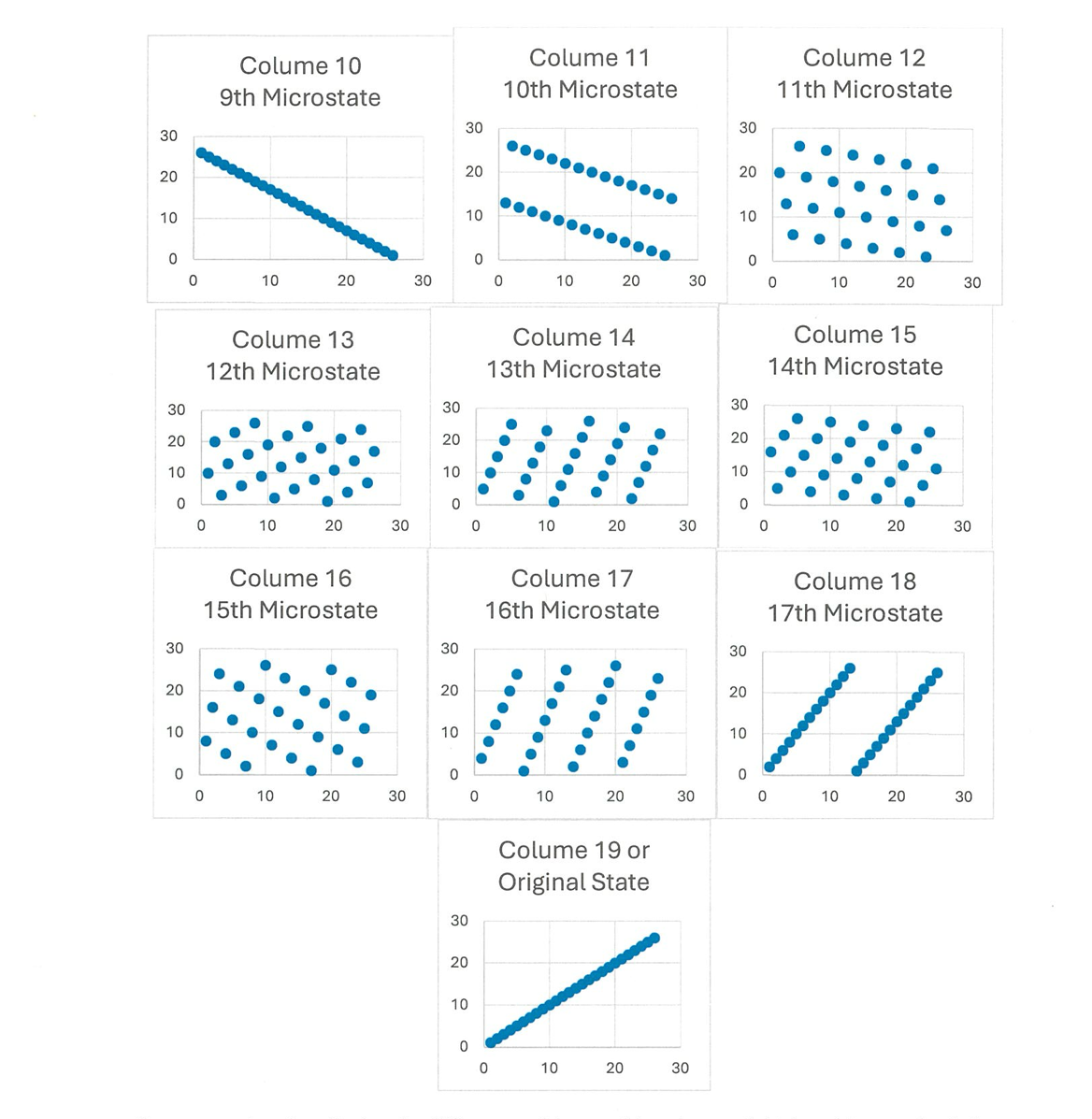

For any single call, the structure is invisible. Across a training run, the structure compounds. Two independent dropout decisions that "should" be uncorrelated share an algebraic relationship determined by the seed and the generator's transition function. Two independent weight initializations in different layers inherit fragments of the same underlying lattice. The network learns these spurious regularities along with the genuine ones.
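RANDU, a historical linear congruential generator with x(k+1) = 65539 · x(k) mod 2^31, shows this kind of algebraic relationship very clearly. Every three consecutive outputs satisfy x(k+2) = 6·x(k+1) − 9·x(k) (mod 2^31), so consecutive triples fall on at most 15 planes in the unit cube, even though each single output looks uniform. RANDU is far weaker than the generators in current frameworks and is used here only because its structure is unusually easy to exhibit.

```python
M = 2**31      # RANDU modulus
A = 65539      # RANDU multiplier, 2**16 + 3


def randu(seed: int, n: int) -> list[int]:
    """Generate n RANDU outputs; the seed must be odd."""
    if seed % 2 == 0:
        raise ValueError("RANDU requires an odd seed")
    x, out = seed, []
    for _ in range(n):
        x = (A * x) % M
        out.append(x)
    return out


def triple_planes(xs: list[int]) -> tuple[int, set[int]]:
    """Check x[k+2] == 6*x[k+1] - 9*x[k] (mod M) on every consecutive triple.

    Returns the number of violations and the set of plane indices j such
    that 9*x[k] - 6*x[k+1] + x[k+2] == j * M.
    """
    violations, planes = 0, set()
    for a, b, c in zip(xs, xs[1:], xs[2:]):
        s = 9 * a - 6 * b + c
        if s % M:
            violations += 1
        else:
            planes.add(s // M)
    return violations, planes


violations, planes = triple_planes(randu(seed=1, n=10_000))
print(violations, len(planes))  # expect 0 violations and at most 15 planes
```

The relation follows from 65539² ≡ 6·65539 − 9 (mod 2^31). It holds for every seed, and no per-value statistic reveals it.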

Stop Feeding Mathematical Simulations to Mathematical Models

The pathology is structural: a deterministic generator cannot escape its own algebraic shape, regardless of how cleverly it is post-processed. The fix is to provide a physical probability cloud rather than a sequence of computed values. ATOFIA injects uncompensated thermodynamic transformations — reconstitution events whose outputs are sampled rather than computed — directly into the AI training fabric.
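This section does not describe ATOFIA's interface, so the sketch below assumes a hypothetical entropy source: any callable that returns n raw bytes. In the usage line, `os.urandom` stands in for it. That function reads the operating system's cryptographically secure generator, which the kernel seeds from environmental noise, so it is a placeholder rather than a thermodynamic source. What the sketch shows is the data path: uniform variates, and a dropout mask built from them, come directly from sampled bytes instead of being computed from a seed.

```python
import os
from typing import Callable

import numpy as np

# Hypothetical interface: a callable returning n bytes of sampled entropy.
EntropySource = Callable[[int], bytes]


def uniforms_from_entropy(source: EntropySource, shape: tuple[int, ...]) -> np.ndarray:
    """Map raw entropy bytes to float64 uniforms in [0, 1)."""
    count = int(np.prod(shape))
    raw = np.frombuffer(source(8 * count), dtype=np.uint64)
    # Keep the top 53 bits so each value is an exactly representable double.
    return ((raw >> np.uint64(11)).astype(np.float64) * 2.0**-53).reshape(shape)


def dropout_mask(source: EntropySource, shape: tuple[int, ...], p: float) -> np.ndarray:
    """Keep-mask for dropout with drop probability p (True means keep)."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return uniforms_from_entropy(source, shape) >= p


mask = dropout_mask(os.urandom, (4, 8), p=0.1)
```

A layer would then apply the usual inverted-dropout scaling, `x * mask / (1 - p)`. Only the source of the uniforms changes.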

The change does not eliminate hallucination in the broader sense; data quality, optimization choices, and architectural inductive biases still dominate model behavior. It removes one specific engine of failure: training-time bias that is invisible to per-step diagnostics but reliably reproducible across runs that share a deterministic substrate. With a thermodynamic anchor, two runs are independent in a way two PRNG-seeded runs cannot be.

What Practitioners Notice First

- Reduced run-to-run correlation in long-tail behavior. Failure modes vary across runs rather than reproducing identically.

- Healthier weight-space coverage. Initialization no longer inherits PRNG lattice structure.

- Cleaner ablation studies. Variance attributable to randomness is genuinely random, not algorithmically structured.
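A simple metric can track the first of these observations. The sketch below is a hypothetical check, not part of ATOFIA. It computes the average pairwise Jaccard overlap between the sets of evaluation examples that each run fails on. A value of 1.0 means every run fails identically. Comparing the number for PRNG-seeded runs against entropy-anchored runs is one way to test the claim on a given workload. The example IDs are made up.

```python
from itertools import combinations
from statistics import mean
from typing import Hashable, Iterable


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity of two failure sets; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_failure_overlap(failures_per_run: Iterable[Iterable[Hashable]]) -> float:
    """Average pairwise Jaccard overlap of failing example IDs across runs.

    1.0 means every run fails on exactly the same examples; values near 0
    mean failure modes differ from run to run.
    """
    runs = [set(r) for r in failures_per_run]
    if len(runs) < 2:
        raise ValueError("need at least two runs")
    return mean(jaccard(a, b) for a, b in combinations(runs, 2))


# Hypothetical failing eval-example IDs from three training runs.
print(mean_failure_overlap([{"q17", "q42", "q90"}, {"q17", "q42"}, {"q42", "q88"}]))
```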

Why This Matters for Production AI

For organizations deploying AI in regulated or safety-sensitive contexts, it matters which behavior is reproducible and which is genuinely variable. A model trained with deterministic randomness is reproducible only in a brittle sense: it is bug-for-bug identical given the same seed, but it carries hidden correlations between supposedly independent decisions. A model trained with thermodynamic entropy has the opposite property: genuinely independent stochasticity. The trade-off is that exact bit-reproducibility requires recording the anchor's outputs alongside the seed.
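Recording the anchor's outputs can be as simple as wrapping the entropy source. The classes below are a hypothetical sketch, not an ATOFIA API. `RecordingSource` logs every chunk it hands out. `ReplaySource` serves the same chunks back in order and fails loudly if a consumer asks for a different number of bytes, which usually means the training code changed between runs. `os.urandom` again stands in for the physical source.

```python
import os
from typing import Callable, List

# Hypothetical interface: a callable returning n bytes of sampled entropy.
EntropySource = Callable[[int], bytes]


class RecordingSource:
    """Wrap an entropy source and log every chunk it returns."""

    def __init__(self, source: EntropySource) -> None:
        self._source = source
        self.log: List[bytes] = []

    def __call__(self, n: int) -> bytes:
        chunk = self._source(n)
        self.log.append(chunk)
        return chunk


class ReplaySource:
    """Serve a recorded log back in order, for bit-exact reruns."""

    def __init__(self, log: List[bytes]) -> None:
        self._log = list(log)
        self._pos = 0

    def __call__(self, n: int) -> bytes:
        if self._pos >= len(self._log):
            raise RuntimeError("replay log exhausted")
        chunk = self._log[self._pos]
        if len(chunk) != n:
            raise RuntimeError(f"requested {n} bytes, log has {len(chunk)}")
        self._pos += 1
        return chunk


recorder = RecordingSource(os.urandom)
first = [recorder(16), recorder(64)]       # live run: draws are logged
replay = ReplaySource(recorder.log)
assert [replay(16), replay(64)] == first   # rerun: identical bytes
```

In practice the log would be persisted next to the run's configuration. Its size grows with the number of draws, which is the storage cost of the trade-off described above.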